At the beginning of the AI Large Language Model fad, I was playing around with the then hyped GPT-2. Besides the generic model, GPT-2 can also be fine-tuned with custom datasets to generate texts with specific styles. I tried different texts, from science fiction, to lectures from Buckminster Fuller, to poetry, and to my own WhatsApp chat history. The results are interesting but not quite convincing. When it comes to the modernist classic Ulysses by James Joyce, I was quite surprised, not at the generated GPT Ulysses, but (at first glance) how the original novel sometimes reads like it was generated by a mindless machine. Curious to see how others react to this, I invited 52 readers to read two randomly selected excerpts, one from Ulysses and one from GPT-2, without knowing which one is which. They were then asked to rate how much they appreciated each text and which one they think was written by James Joyce--inspired by the classic Turing test for evaluating artificial intelligence.

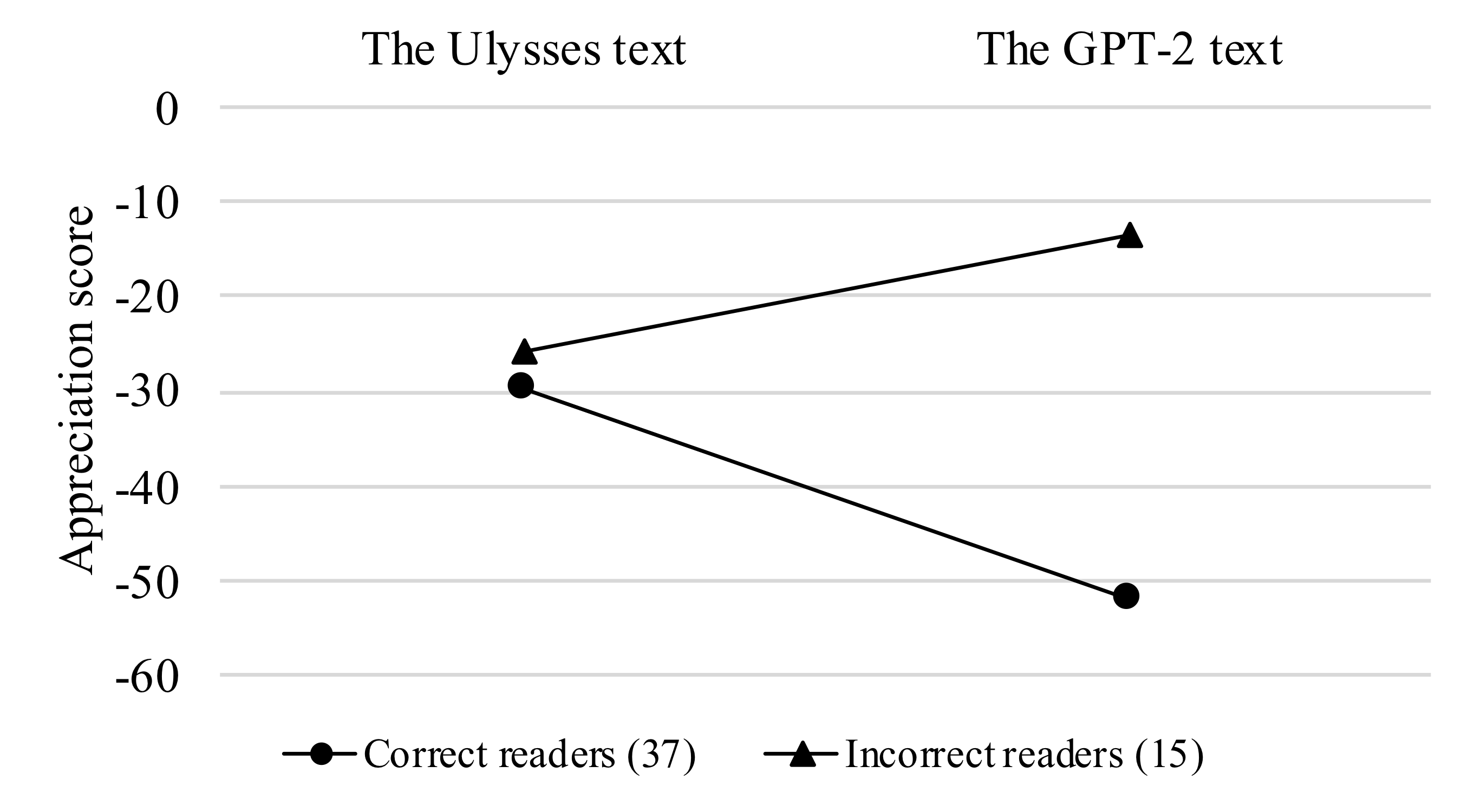

The results show that more than half of the readers (37) can correctly tell which text is generated by a machine. For both groups of readers, the text they appreciate better is considered by them to be from a literary classic, regardless of its origin. It is interesting to notice that for Ulysses, both correct and incorrect readers rated it similarly, while for the GPT-2 text, there is a clear difference between the two groups of readers, despite that both texts are rated negatively by all readers.

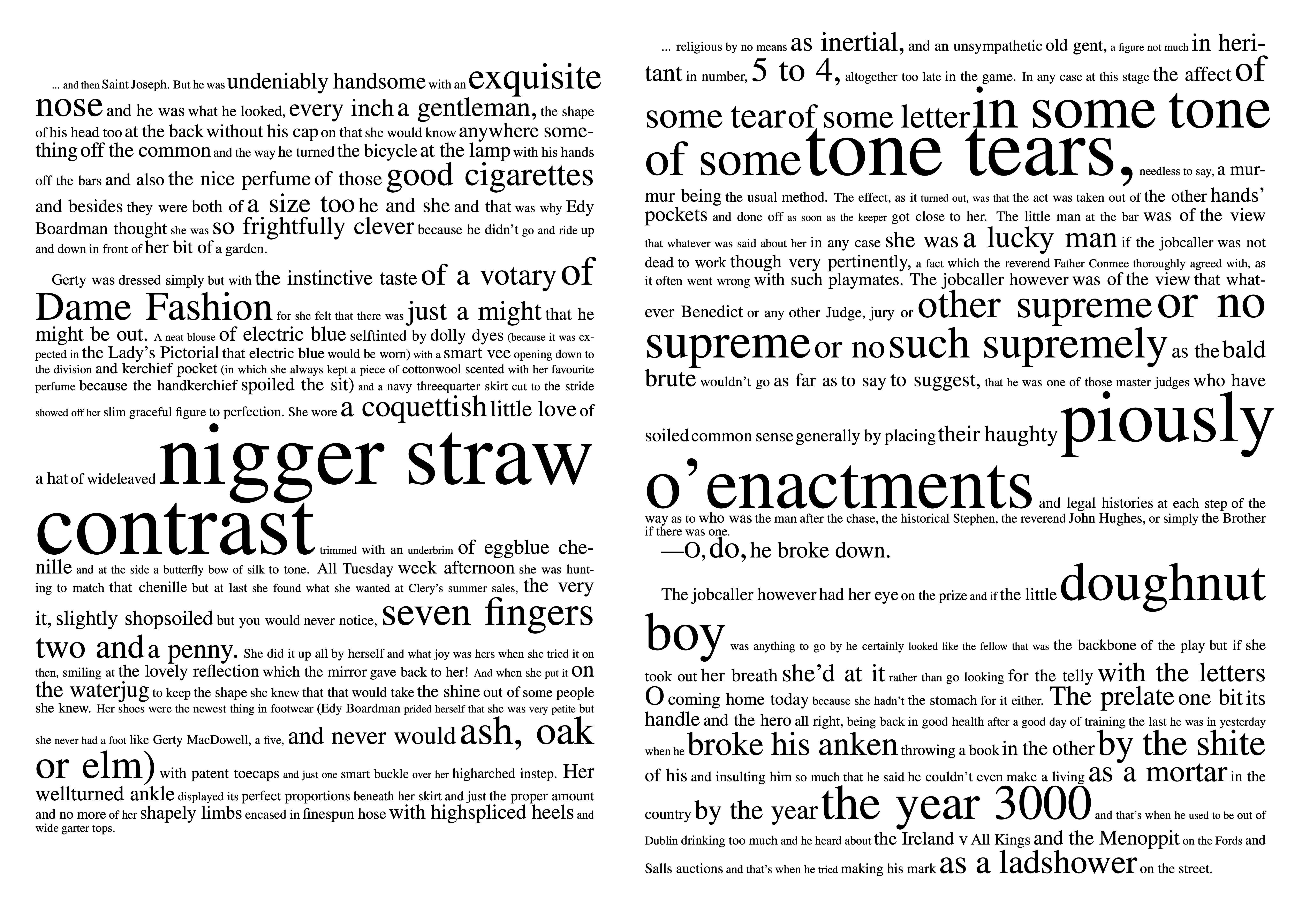

I also asked the readers to highlight in the text, the phrases they found striking, which is often used as a measurement for literariness. The results are visualised as font sizes (left: Ulysses, right: GPT-2):